The fallacy of fixing bugs

Fallacy - a mistaken belief, especially one based on unsound argument.

When I first became a software developer more than 15 years ago, I was tasked with helping ship Office 2007 and improving the quality of the product. My manager at the time assigned over 100 bugs to me, and I dutifully fixed those bugs over the next 30 days. I was rewarded well for “saving the day”, but I had no way to know objectively whether I had improved the quality of the product. In a waterfall and boxed product model, you may be able to get away with this approach, but I’ve been surprised to see this pattern perpetuated for software that ships on a faster cadence.

We've all been there before. The engineering team is under pressure to write more features, and they lose discipline in keeping up with the bug backlog. The software has quality issues and/or your management team notices that the bug backlog volume is out of control. Time to spring into action! Fix all the bugs! Bug jail! Error budget! Late night working dinners! Stop all the features!

The result? Vanity metric improvement unlocked! Resume working on those features. We've fixed our quality problems. Nothing more to see here. Fast forward three to six months. The bugs are back, quality still hasn't improved, and the cycle of surging to squash all your bugs repeats.

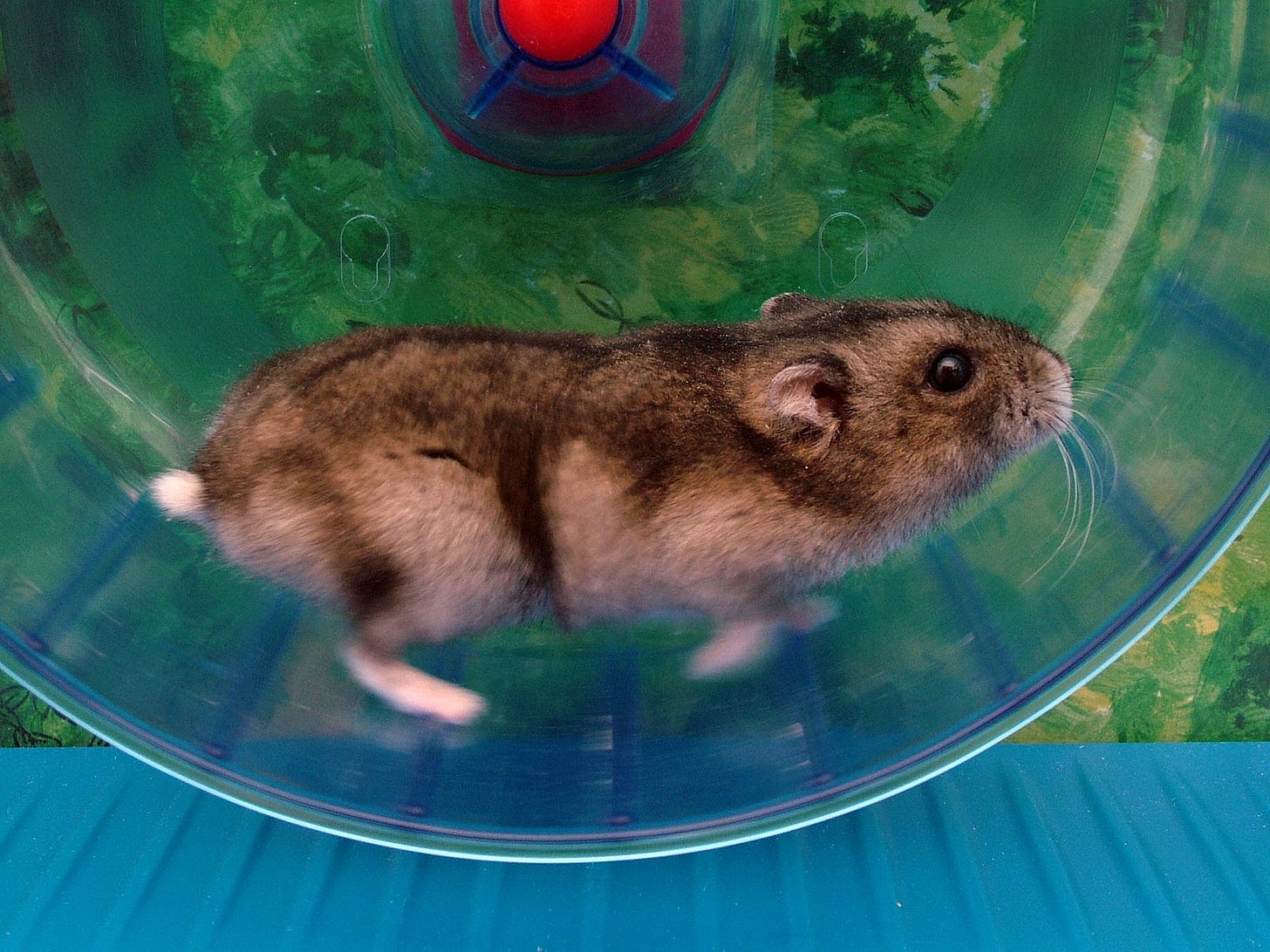

The proverbial hamster running in a wheel

The problem

Let's say you have 100 defects and 20 engineers working on them. Each engineer will take a narrow view of the issues assigned to them, fix them, and be wholly unaware of the issues the other engineers are fixing. This makes it much harder in hindsight to describe objectively how you improved the quality of the product, aside from the poor vanity metric of "number of bugs fixed". An engineer will also fix one instance of what may be a much bigger class of issues, unaware that they may be fixing the least important instance. For example, they might fix an error that one internal user saw rather than the topmost error your paying customers saw. I have rarely seen this approach be the panacea for product quality problems. Organizations make bug fixing a distributed problem rather than a centralized one with strategic ownership.

Here are four reasons why this doesn’t work:

No 1:1 mapping between bugs and quality. In reality, the bug backlog takes on many forms: issues (found internally and externally), technical debt, feature requests, and possibly more! Therein lies our fallacy that to improve quality, we must fix all the bugs. Defining a quality strategy to shift the perception of your customers is a much bigger, challenging, strategic problem beyond bug fixing, which I'll write about in a future post.

The team doesn't have a shared definition of a bug. Is it something that can be fixed in a few days? Is it only for shipped features? Is it just for functional bugs or for user experience issues? Without a shared definition, it is difficult to say which problems you've actually solved once the bugs are resolved.

Missing accountability and collaboration. Bugs are not just an engineering responsibility; they are typically product management and UX designer responsibilities as well (this three-legged stool of the product triad is essential). The engineers working on bugs are usually the same ones working on the features you want to ship. If product management and designers don't understand which bugs the engineers are fixing, why they're fixing them, in what priority order, and what impact the fixes will have, they are missing the bigger picture of how their scarce engineering resources are allocated.

Missing bug handling mechanisms. What are all the fields you use in your bug tracking system? Is there consistency in how they're used? Who creates and sends reports on the bug statistics? Does every area in the bug tracking system have a clear owner? How long is it acceptable to keep a bug open?

Why organizations continue to make these mistakes

Management gravitates towards vanity metrics and engineers love the sense of accomplishment of resolving an item that's assigned to them. It's very hard to un-see a bug once it enters the backlog, and the product triad lacks the conviction to “won’t fix” a known defect in the product.

And let's face it - figuring out how to describe a strategic approach to quality is a very hard problem, so we start with the obvious solutions to spring the team into action (fix the issues that are already there). Thinking strategically across an entire bug backlog requires higher-level management to do a deeper level of "trust and verify", to help see the seams between the potentially hundreds of engineers that will be working on the defects.

How we fix it

A high-level quality strategy, defined objectively by a set of metrics that describe whether your users are having a delightful experience. These metrics should be both quantitative and qualitative. You can identify the most critical scenarios in your product, measure the reliability and latency of those scenarios (Apdex is great), and track generic error rates, crashes, etc. Qualitative metrics like NPS and verbatim user feedback help you understand whether your improvements in quantitative metrics result in subjective improvements from the customer's point of view.
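To make the Apdex mention concrete, here is a minimal sketch of the standard Apdex calculation for one critical scenario. The function name, the sample latencies, and the 0.5 second threshold are assumptions for illustration; pick the threshold that matches what your users consider responsive.

```python
from typing import Iterable


def apdex(latencies_s: Iterable[float], threshold_s: float) -> float:
    """Apdex = (satisfied + tolerating / 2) / total samples.

    satisfied:  latency <= T
    tolerating: T < latency <= 4T
    frustrated: everything slower (contributes 0 to the score)
    """
    samples = list(latencies_s)
    if not samples:
        raise ValueError("need at least one latency sample")
    satisfied = sum(1 for t in samples if t <= threshold_s)
    tolerating = sum(1 for t in samples if threshold_s < t <= 4 * threshold_s)
    return (satisfied + tolerating / 2) / len(samples)


# Hypothetical latencies (in seconds) for one critical scenario.
samples = [0.2, 0.4, 0.5, 1.1, 1.9, 2.5]
score = apdex(samples, threshold_s=0.5)
print(f"Apdex: {score:.2f}")
```

A score of 1.0 means every sampled request was satisfying and 0.0 means every one was frustrating, which gives you a single number to track before and after a quality investment.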

Having the right strategy and metrics will allow you to take bigger bets to meaningfully and objectively improve the quality in a much more measured way. Is it better to say after a month of dev work, "we improved the reliability and latency of the most critical task in the product" or "we fixed 15 bugs"?

If you are skeptical that prioritizing your quality improvements based on your metrics (instead of bugs) will be reflected in a burndown of your bug backlog, then you have the wrong KPIs. Fix that problem instead.

Mechanisms and guiding principles. This should take the form of a living document (wiki) which is shared broadly across the organization and signed off by each product triad. It should address:

Define what a bug is and whether it belongs in the defect backlog or somewhere else

Shared definition of severity and priority

Establish other critical metadata and how it should be used. "Found by", "Fix Version", "# of users impacted" - all of these help drive data-driven decision making.

Establish ownership of bug tracking hierarchies across product, engineering, and design (and maybe other disciplines)

Establish SLAs for untriaged bugs (bugs nobody has looked at) and bugs of certain sev/pri. It's expected that the feature team meets regularly to triage bugs.
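As a rough sketch of how the metadata and SLA items above could be checked automatically, the snippet below flags bugs that have been open longer than allowed. The `Bug` fields, the SLA durations, and the bug IDs are all hypothetical; your tracker's fields and your agreed SLAs will differ.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Bug:
    bug_id: str
    opened: datetime
    severity: Optional[int] = None  # None means nobody has triaged it yet
    found_by: str = "internal"
    users_impacted: int = 0


# Hypothetical SLAs: how long a bug may stay open in each state.
UNTRIAGED_SLA = timedelta(days=3)
SEVERITY_SLA = {1: timedelta(days=2), 2: timedelta(days=14), 3: timedelta(days=60)}


def breaches_sla(bug: Bug, now: datetime) -> bool:
    """Return True if the bug has been open longer than its SLA allows."""
    age = now - bug.opened
    if bug.severity is None:
        return age > UNTRIAGED_SLA
    return age > SEVERITY_SLA[bug.severity]


now = datetime(2024, 6, 1)
bugs = [
    Bug("B-1", datetime(2024, 5, 31)),              # untriaged, 1 day old
    Bug("B-2", datetime(2024, 5, 20)),              # untriaged, 12 days old
    Bug("B-3", datetime(2024, 5, 28), severity=1),  # sev 1, 4 days old
    Bug("B-4", datetime(2024, 5, 1), severity=3),   # sev 3, 31 days old
]
overdue = [b.bug_id for b in bugs if breaches_sla(b, now)]
print(overdue)
```

A report like this is only useful if someone owns it, which is why the ownership item in the list matters as much as the SLA numbers themselves.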

A *centralized* burndown strategy. Group similar items into a theme and describe the before/after impact. For example, lump all "fit and finish" user experience issues into a high level tracking item and prioritize the entire set of issues together.
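One way to picture the theme grouping is the small sketch below, which rolls individual bugs up into themes and ranks the themes by impact. The backlog entries, theme names, and the "users impacted" numbers are invented for illustration, and summing users per theme is a simplification that may double count people who hit more than one bug.

```python
from collections import defaultdict

# Hypothetical backlog: (bug id, theme, users impacted).
backlog = [
    ("B-10", "fit and finish", 40),
    ("B-11", "checkout errors", 900),
    ("B-12", "fit and finish", 15),
    ("B-13", "checkout errors", 350),
    ("B-14", "slow search", 200),
]


def group_by_theme(items):
    """Roll bugs up into themes and rank themes by total users impacted."""
    themes = defaultdict(lambda: {"bugs": [], "users_impacted": 0})
    for bug_id, theme, users in items:
        themes[theme]["bugs"].append(bug_id)
        themes[theme]["users_impacted"] += users  # may double count users
    return sorted(
        themes.items(),
        key=lambda kv: kv[1]["users_impacted"],
        reverse=True,
    )


ranked = group_by_theme(backlog)
for theme, info in ranked:
    print(theme, info["users_impacted"], info["bugs"])
```

Prioritizing at the theme level lets you describe the before and after in terms of the theme's impact rather than a count of closed tickets.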

The guts to say no. You don’t have to fix a bug just because someone took the time to log it. I prefer "declaring bankruptcy" on all defects over a certain age so the team isn't tempted to spend time on them. Your business and customers have moved on, and if you're relying on those old logged bugs to represent the highest priority set of items to fix, you're in trouble.
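A bankruptcy rule can be as simple as an age cutoff. The sketch below splits a backlog into bugs to keep and bugs to close; the 365 day cutoff, the function name, and the sample bugs are assumptions, since the right age depends on your product and release cadence.

```python
from datetime import date, timedelta


def declare_bankruptcy(bugs, today, max_age_days=365):
    """Split (bug_id, opened_date) pairs into those to keep and those to close.

    Anything opened before the cutoff is closed as "won't fix".
    """
    cutoff = today - timedelta(days=max_age_days)
    keep, close = [], []
    for bug_id, opened in bugs:
        (close if opened < cutoff else keep).append(bug_id)
    return keep, close


# Hypothetical backlog entries.
backlog = [
    ("B-20", date(2022, 1, 15)),
    ("B-21", date(2024, 3, 1)),
    ("B-22", date(2023, 5, 1)),
]
keep, close = declare_bankruptcy(backlog, today=date(2024, 6, 1))
print("keep:", keep)
print("close:", close)
```

If a closed bug still matters, it will resurface through your metrics or fresh customer feedback, and it can be logged again with current context.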

Postmortems. To prevent cyclical bug patterns, you have to understand why the bugs escaped to begin with. The goal should be to move quality upstream in the development process. Postmortems will likely lead you to improve your test automation, rings of validation, telemetry, and areas of the code that are ripe for refactoring.

If you're in an engineering leadership position and need some help in establishing these best practices in your organization, send me a message and I'll share some content and templates to save you some time!

As always great insights D - one thing I have learned is that engg teams should push back on the bug list from PM or customers as there is too much emphasis on “what and how many bugs” and very little clarity on “why” is it a bug. Once we know why it’s a bug we can see the impact of the bug and that helps determine the priority easier than saying all UI or visual bugs are Sev 3. When you see a typo maybe u push it out (I wouldn’t) but if it’s a typo in a product pricing number then it’s not a “typo bug” but a Sev 1 as it has a bigger impact.